How a hacking group managed to get into Microsoft inboxes | The EU Artificial Intelligence Act

CyberInsights #127 - Russia's Midnight Blizzard got access to Microsoft mailboxes | The EU releases the final draft of the AI Act, once again paving the way for the rest of the world

The story of how Midnight Blizzard managed to get into Microsoft mailboxes

It’s simpler than it looks. A series of small, ignored risks added up to a catastrophe.

Microsoft revealed how hackers managed to get into their mailboxes. It started with a very low-tech password spraying attack.
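Password spraying tries a few common passwords against many accounts, so no single account racks up enough failures to trigger a lockout. Here is a minimal sketch of how you might spot that pattern in sign-in logs. The `SignIn` record, its field names and the thresholds are my own assumptions for illustration, not a real log schema or anything Microsoft uses.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SignIn:
    """One sign-in attempt. Field names are hypothetical, not a real log schema."""
    source_ip: str
    username: str
    success: bool


def find_spray_sources(events, min_accounts=5, max_failures_per_account=3):
    """Return source IPs whose failed logins fan out across many accounts.

    A spraying source is "wide" (many distinct accounts) and "shallow"
    (only a few failures per account). Both thresholds are assumed values.
    """
    failures = defaultdict(lambda: defaultdict(int))  # ip -> username -> count
    for event in events:
        if not event.success:
            failures[event.source_ip][event.username] += 1

    suspects = []
    for ip, per_account in failures.items():
        wide = len(per_account) >= min_accounts
        shallow = max(per_account.values()) <= max_failures_per_account
        if wide and shallow:
            suspects.append(ip)
    return sorted(suspects)


if __name__ == "__main__":
    # One failure each against eight accounts: looks like spraying.
    sprayed = [SignIn("203.0.113.7", f"user{i}", False) for i in range(8)]
    # Twenty failures against one account: classic brute force, not spraying.
    brute = [SignIn("198.51.100.9", "admin", False) for _ in range(20)]
    print(find_spray_sources(sprayed + brute))
```

Grouping by source IP is only a starting point. An attacker who spreads attempts across many addresses would slip past this check, so in practice you would also group by time window or by the passwords being tried.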

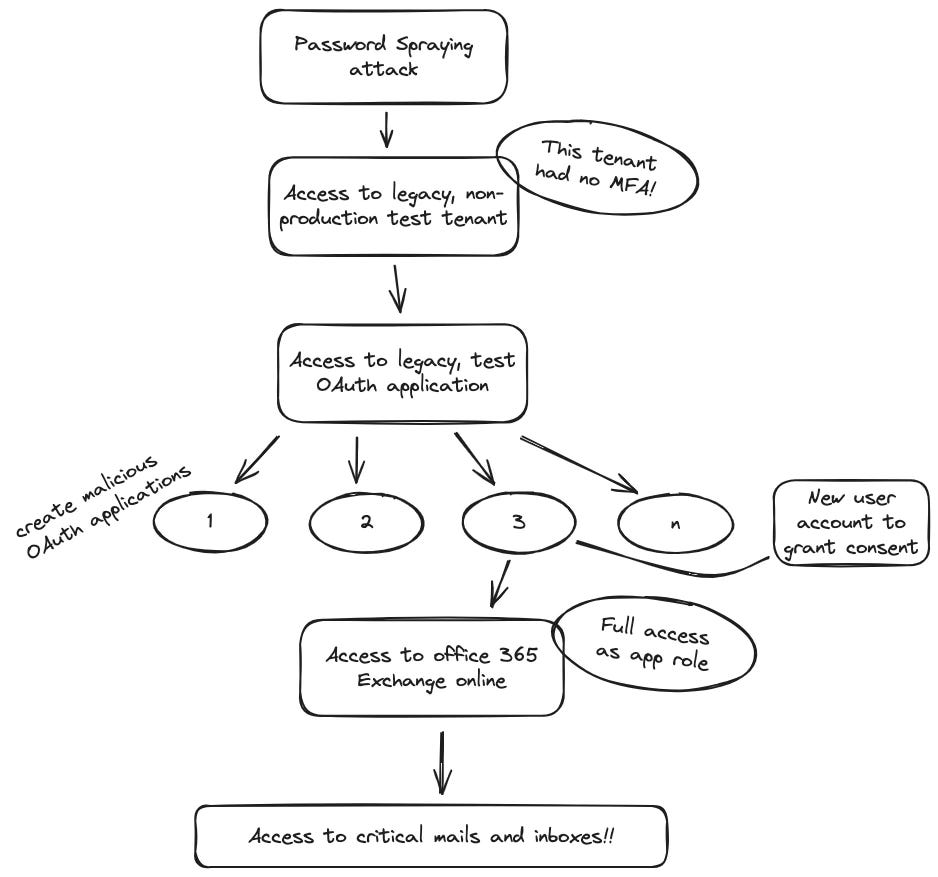

This article covers the detailed story [LINK]. I have taken the liberty of putting it into a flowchart.

Password spraying provided access to a legacy, non-production test tenant. Look at the three keywords here:

Legacy - Old. Not many people want to touch an IT asset that is old and still running. You never know what will break.

Non-production - Probably used and forgotten. No one bothered to switch off the lights?

Test - Ah! The poor cousin of prod.

With this access, the hackers were able to take over the next system: a legacy test OAuth app! Legacy and test, right, but OAuth?

OAuth is an open standard for authorisation. According to Microsoft, this particular app had elevated access to the corporate environment. Why leave an app like that lying around? Well, it was a legacy system. And used only for testing. Probably ignored again.

Now the attackers created multiple OAuth applications of their own. When you control an app with that much privilege, you can use it to authorise other applications. They also created a user account to grant consent to the new apps, which were then given access to Office 365 Exchange Online (app-only access, of course). A user account would probably have raised flags, but an app account? Those can access anything, right? Even auditors ignore service accounts!

And thus, you have access to mailboxes.
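The chain above suggests one thing worth auditing: powerful app-only permissions that were consented to by a brand-new account. The sketch below shows the idea. `full_access_as_app` is the Exchange Online role named in Microsoft's account of the incident. Everything else (the `AppGrant` record, the other entries in the permission set, the seven-day window) is a hypothetical choice of mine, not a real directory export format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# App-only permissions worth a second look. Apart from full_access_as_app,
# the entries in this set are illustrative assumptions.
HIGH_RISK_APP_PERMISSIONS = {
    "full_access_as_app",
    "Mail.ReadWrite",
    "Directory.ReadWrite.All",
}


@dataclass(frozen=True)
class AppGrant:
    """A consent record. Hypothetical shape, not a real directory export."""
    app_name: str
    permission: str
    consented_by: str
    consenter_created: datetime
    granted_at: datetime


def risky_grants(grants, new_account_window=timedelta(days=7)):
    """Flag high-privilege app permissions consented to by a very new account."""
    flagged = []
    for grant in grants:
        high_privilege = grant.permission in HIGH_RISK_APP_PERMISSIONS
        account_age = grant.granted_at - grant.consenter_created
        if high_privilege and account_age <= new_account_window:
            flagged.append(grant)
    return flagged


if __name__ == "__main__":
    now = datetime(2024, 1, 20)
    grants = [
        AppGrant("old-reporting-app", "full_access_as_app", "admin",
                 datetime(2020, 1, 1), now),
        AppGrant("new-sync-app", "full_access_as_app", "svc-temp",
                 now - timedelta(days=1), now),
    ]
    for grant in risky_grants(grants):
        print(grant.app_name, grant.permission, grant.consented_by)
```

The point of the design is that service and app accounts get the same scrutiny as human ones: the check keys on what was granted and who granted it, not on whether a person logs in with it.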

Take Action:

To the CISO at large,

Pray, what keeps you from implementing MFA in your organisation as of yesterday? It’s not the 90s anymore and it’s not the 2000s either.

To the CTO,

Yes, it takes a lot of test systems and applications to get one application running effectively in production, but that does not mean you should allow your devs and testers to haphazardly strew digital debris about the network. Teach your kids to clean up after themselves!
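Both letters boil down to knowing what you have. As a rough sketch, assuming you can export an inventory of accounts with an environment tag, an MFA flag and a last-used date (the `Account` record and the 90-day cutoff are hypothetical), a clean-up report could look like this:

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Account:
    """An inventory row. Hypothetical fields, not a real export format."""
    name: str
    environment: str  # e.g. "prod", "test", "legacy"
    mfa_enabled: bool
    last_used: date


def cleanup_candidates(accounts, today, stale_after=timedelta(days=90)):
    """List accounts without MFA and non-production accounts gone stale."""
    report = {"no_mfa": [], "stale_non_prod": []}
    for account in accounts:
        if not account.mfa_enabled:
            report["no_mfa"].append(account.name)
        idle_for = today - account.last_used
        if account.environment != "prod" and idle_for > stale_after:
            report["stale_non_prod"].append(account.name)
    return report


if __name__ == "__main__":
    inventory = [
        Account("alice", "prod", True, date(2024, 1, 18)),
        Account("test-admin", "test", False, date(2022, 6, 1)),
        Account("legacy-svc", "legacy", True, date(2021, 3, 15)),
    ]
    print(cleanup_candidates(inventory, today=date(2024, 1, 20)))
```

An account that shows up in both lists, like a forgotten test admin without MFA, is exactly the kind of debris this story started with.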

Final Draft of the EU Artificial Intelligence Act released

The EU aims for an encore of its successful GDPR.

The European Union released the final draft of the EU Artificial Intelligence Act [LINK].

Their PDF summary [LINK] is a good document for your TL;DR.

Some points that I really like about the act:

Providers of general-purpose AI models have to publish a summary of the content used for training.

Model evaluation and adversarial testing are mandatory for general-purpose AI models that pose systemic risk.

The following AI systems are ‘prohibited’:

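using subliminal, manipulative or deceptive techniques to distort behaviour and impair informed decision making

exploiting vulnerabilities related to age, disability or socio-economic circumstances

biometric categorisation systems that infer sensitive attributes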

social scoring

assessing the risk of an individual committing a criminal offence based solely on profiling or personality traits (no Minority Report type pre-crime unit, then...)

untargeted scraping of facial images to build facial recognition databases

inferring emotions in workplaces or educational institutions (wonder why they allowed inferring moods on, say, a train)

real-time remote biometric identification in public places for law enforcement, with narrow exceptions

Then there is a definition of high-risk AI systems and some rules on how to go about implementing those.

There is an online compliance checker [LINK].

Although it does appear hastily crafted and not as thorough as the GDPR, one must remember that the growth of AI is very recent, and imagining the possible risks and scenarios is not easy.

Take Action:

If you are building AI-based systems, go through these rules and ensure compliance with them. If GDPR is anything to go by, most other AI laws will be poor imitations of this one.

If you work with government agencies, do see if you can get your government to create and implement laws like this one, even though most governments might see them as a hindrance to ‘national security’.